3.5 gigawatts: what does that actually mean?

Gigawatts are not a unit we usually associate with computing: they measure electrical power, and here they describe how much power the data centers running the chips will draw. For comparison, the entire Czech Republic consumes about 6 gigawatts of electricity on average. Anthropic has therefore ordered infrastructure whose power draw corresponds to more than half of our entire country's consumption, and that is just for training and operating AI models.

Specifically, in 2026 Broadcom will supply Anthropic with 1 gigawatt of TPU capacity via Google Cloud infrastructure. In 2027, this is expected to grow to more than 3.5 gigawatts. Broadcom CEO Hock Tan confirmed this personally, stating: "We are off to a very good start for Anthropic in 2026."
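The comparison with Czech consumption is a ratio of average power, and turning power into annual energy means multiplying by the hours in a year. This back-of-the-envelope sketch reproduces that arithmetic with the article's figures. It assumes the contracted capacity would draw its full rating around the clock, so the energy numbers are upper bounds, not reported consumption.

```python
# Back-of-the-envelope comparison with the figures quoted in the article.
# Assumption: contracted capacity draws its full rating 24/7, so the
# annual-energy numbers are upper bounds, not reported consumption.

HOURS_PER_YEAR = 365 * 24  # 8,760 hours in a non-leap year
CZECH_AVG_GW = 6.0         # average Czech electricity load, per the article


def share_of_national_load(capacity_gw: float, national_avg_gw: float = CZECH_AVG_GW) -> float:
    """Contracted capacity as a fraction of a country's average electrical load."""
    return capacity_gw / national_avg_gw


def annual_energy_twh(avg_power_gw: float) -> float:
    """Energy drawn in a year at constant power: GW * hours = GWh, / 1000 = TWh."""
    return avg_power_gw * HOURS_PER_YEAR / 1000


for year, capacity_gw in [(2026, 1.0), (2027, 3.5)]:
    print(
        f"{year}: {capacity_gw} GW = {share_of_national_load(capacity_gw):.0%} "
        f"of Czech average load, at most {annual_energy_twh(capacity_gw):.1f} TWh/year"
    )
```

With these inputs, 3.5 GW comes to 58% of the Czech average load, which is where "more than half" comes from.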

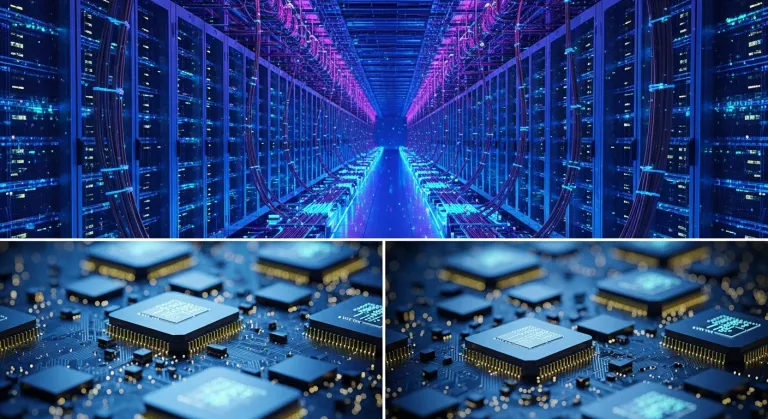

TPUs (Tensor Processing Units) are specialized chips designed directly by Google for working with neural networks. Unlike NVIDIA GPUs, they are optimized specifically for the matrix operations that AI models use. The advantage: when used correctly, they are significantly more efficient and cheaper to operate.
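The "matrix operations" in question are mostly matrix multiplications: a neural network layer multiplies a batch of input vectors by a weight matrix, adds a bias, and applies a nonlinearity. TPUs contain dedicated matrix multiply units (systolic arrays) that carry out enormous numbers of these multiply-accumulate steps in parallel. A minimal plain-Python sketch of one dense layer, with made-up weights, shows the arithmetic the hardware speeds up; real frameworks hand this work to compilers such as XLA instead of looping in Python.

```python
from typing import List

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Naive matrix multiplication: (n x k) @ (k x m) -> (n x m)."""
    n, k, m = len(a), len(b), len(b[0])
    if any(len(row) != k for row in a):
        raise ValueError("inner dimensions do not match")
    out = [[0.0] * m for _ in range(n)]
    for i in range(n):
        for j in range(m):
            acc = 0.0
            for p in range(k):
                acc += a[i][p] * b[p][j]  # one multiply-accumulate (MAC)
            out[i][j] = acc
    return out


def dense_relu(x: Matrix, w: Matrix, bias: List[float]) -> Matrix:
    """One dense layer: y = relu(x @ w + bias)."""
    y = matmul(x, w)
    return [[max(0.0, v + bi) for v, bi in zip(row, bias)] for row in y]


# Illustrative values: a batch of 2 inputs with 3 features, projected to 2 outputs.
x = [[1.0, 2.0, 3.0],
     [0.5, -1.0, 2.0]]
w = [[0.1, -0.2],
     [0.0, 0.3],
     [0.2, 0.1]]
b = [0.0, -0.5]
print(dense_relu(x, w, b))  # ≈ [[0.7, 0.2], [0.45, 0.0]] up to floating-point rounding
```

For an (n × k) input and (k × m) weights, one layer performs n·k·m multiply-accumulates, which is why a chip built around exactly this pattern pays off at the scale of large language models.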

Revenues that astonished analysts

Along with the infrastructure deal, Anthropic released financial figures that shook the industry. Run-rate revenue (annual revenue extrapolated from the current pace) exceeded $30 billion. At the end of 2025, the figure was around $9 billion: more than a threefold increase in less than four months.

Enterprise customers tell an even more striking story. The number of companies spending more than $1 million a year on the Claude API and enterprise products has surpassed one thousand, whereas in February 2026 there were "only" just over 500: a doubling in less than two months.
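Both growth figures can be converted into an implied doubling time under the simplifying assumption of constant compound growth. The sketch also spells out run-rate annualization; multiplying one month's revenue by 12 is a common convention, but the article does not say which period Anthropic annualizes, so treat that part as an assumption. Because the article gives the elapsed times as upper bounds, the resulting doubling times are upper bounds too.

```python
import math


def run_rate(period_revenue: float, periods_per_year: int = 12) -> float:
    """Annualize revenue from one recent period (monthly by default).
    Assumption: the article does not say which period Anthropic annualizes."""
    return period_revenue * periods_per_year


def doubling_time(start: float, end: float, elapsed: float) -> float:
    """Doubling time under constant compound growth from `start` to `end`
    over `elapsed` time units: elapsed * ln(2) / ln(end / start)."""
    if start <= 0 or end <= start:
        raise ValueError("need 0 < start < end")
    return elapsed * math.log(2) / math.log(end / start)


# Article figures; elapsed times are upper bounds ("less than four/two months").
revenue_months = doubling_time(9.0, 30.0, elapsed=4.0)   # run-rate revenue, $bn
customer_months = doubling_time(500, 1000, elapsed=2.0)  # customers spending > $1M/yr

print(f"$2.5bn in one month annualizes to ${run_rate(2.5):.0f}bn")
print(f"Run-rate revenue doubling time: under {revenue_months:.1f} months")
print(f"$1M+ customer count doubling time: under {customer_months:.1f} months")
```

Under these assumptions, revenue doubled at least every 2.3 months and the number of large customers at least every 2 months.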

Anthropic CFO Krishna Rao commented succinctly: "We are making the largest computing commitment in the company's history to keep pace with unprecedented growth."

Broadcom as a key player — and what it gets out of it

Broadcom acts as an intermediary in this triangle: it co-designs the custom ASIC chips (in this case Google's TPUs) and coordinates their production, while Google procures them and operates them in its data centers. Anthropic then uses this capacity via Google Cloud.

Analysts at Mizuho estimate that Broadcom will earn approximately $21 billion from its partnership with Anthropic in 2026, and up to $42 billion in 2027. This is one of the most significant individual customer relationships in Broadcom's history.

Diversification as a strategic safeguard

An interesting detail: Anthropic is not building its entire future on a single chip supplier. Claude models are trained and operated in parallel on three platforms:

- Google TPU — via Google Cloud, the new mega-deal

- AWS Trainium — Amazon's own AI chips via Amazon Web Services

- NVIDIA GPU — the industry standard for AI computing

As a result, Claude is the only frontier AI model available on all three of the world's largest cloud platforms: AWS, Google Cloud, and Microsoft Azure (through partnerships). This triple presence is intentional: it provides resilience to outages, room to optimize prices, and the ability to scale quickly wherever capacity is available.
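The resilience and pricing arguments boil down to a placement decision: send work to a platform that is up, has room, and is cheapest. Here is a toy sketch of that logic with hypothetical platform names, capacities, and relative prices. It is not how Anthropic actually schedules workloads, which the article does not describe.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Platform:
    name: str              # hypothetical label
    healthy: bool          # False during an outage
    free_capacity: int     # hypothetical unit, e.g. free accelerator slots
    price_per_unit: float  # hypothetical relative cost


def pick_platform(platforms: List[Platform], demand: int) -> Optional[Platform]:
    """Cheapest healthy platform that can absorb `demand`, or None if none can."""
    candidates = [p for p in platforms if p.healthy and p.free_capacity >= demand]
    return min(candidates, key=lambda p: p.price_per_unit, default=None)


fleet = [
    Platform("google-tpu", healthy=True, free_capacity=800, price_per_unit=0.8),
    Platform("aws-trainium", healthy=False, free_capacity=1200, price_per_unit=0.7),  # simulated outage
    Platform("nvidia-gpu", healthy=True, free_capacity=300, price_per_unit=1.0),
]

choice = pick_platform(fleet, demand=500)
print(choice.name if choice else "no capacity")  # google-tpu: Trainium is down, GPUs lack room
```

With a single supplier, the simulated outage would leave `pick_platform` nothing to choose from; with three, the work simply lands elsewhere.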

Context: $50 billion commitment and US AI infrastructure

The new agreement is not an isolated step. It follows Anthropic's November 2025 commitment to invest $50 billion in US AI infrastructure. As part of that commitment, Anthropic partnered with the British company Fluidstack and announced data centers in Texas and New York that will come online throughout 2026.

The new TPU capacity from Google and Broadcom extends this commitment into 2027 and beyond. The vast majority of the new infrastructure will be physically located in the United States, partly for economic reasons and partly in response to pressure from the US government to build domestic AI capacity.

What this means for Claude users — including those in the Czech Republic

Claude is fully available in the Czech Republic — both as a web application at claude.ai and as an API for businesses. A Czech localization of the interface exists, and the model natively understands Czech and responds in Czech at a solid level.
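For businesses, API access means HTTP requests to Anthropic's Messages endpoint, and Czech prompts need nothing special beyond UTF-8 encoding. This minimal standard-library sketch builds (but does not send) such a request. The model ID is an assumption to check against Anthropic's current model list. The API key is read from the `ANTHROPIC_API_KEY` environment variable, which the official SDK also uses by default. The sample prompt asks for a short reply to a customer inquiring about the status of an order.

```python
import json
import os
import urllib.request

API_URL = "https://api.anthropic.com/v1/messages"
MODEL = "claude-sonnet-4-5"  # assumption: verify against Anthropic's current model IDs


def build_request(prompt: str, api_key: str, max_tokens: int = 512) -> urllib.request.Request:
    """Build (but do not send) a Messages API request; UTF-8 keeps Czech diacritics intact."""
    body = {
        "model": MODEL,
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(body, ensure_ascii=False).encode("utf-8"),
        headers={
            "x-api-key": api_key,
            "anthropic-version": "2023-06-01",
            "content-type": "application/json",
        },
        method="POST",
    )


req = build_request(
    "Napiš krátkou odpověď zákazníkovi, který se ptá na stav své objednávky.",
    os.environ.get("ANTHROPIC_API_KEY", ""),
)
# Sending it requires a valid key and network access:
# with urllib.request.urlopen(req) as resp:
#     print(json.load(resp)["content"][0]["text"])
```

In production code, Anthropic's official `anthropic` Python SDK wraps the same endpoint (`client.messages.create(...)`) and takes care of details such as authentication headers and retries.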

The massive increase in computing capacity should show up primarily in three areas:

- Response speed — less waiting during peak times, better responsiveness for API customers

- Availability of more powerful models — greater capacity allows for deploying more expensive models for more users

- Price — with more efficient infrastructure utilization, there is room for reducing API prices
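Why extra capacity matters most at peak times follows from basic queueing theory: waiting time rises sharply as load approaches capacity. This sketch uses the textbook M/M/1 single-server queue, where the mean time a request spends in the system is W = 1/(μ − λ) for arrival rate λ and service rate μ. Real LLM serving runs many accelerators in parallel with batching, so this is a deliberate simplification with hypothetical numbers. It shows the shape of the effect, not actual Claude latencies.

```python
def mm1_time_in_system(arrival_rate: float, service_rate: float) -> float:
    """Mean time in an M/M/1 queue (waiting + service): W = 1 / (mu - lambda).
    Returns infinity when arrivals match or exceed capacity (unbounded queue)."""
    if arrival_rate >= service_rate:
        return float("inf")
    return 1.0 / (service_rate - arrival_rate)


peak_load = 90.0  # hypothetical requests per second at peak
for capacity in (95.0, 120.0, 180.0):  # hypothetical requests per second served
    w = mm1_time_in_system(peak_load, capacity)
    print(f"capacity {capacity:>5.0f}/s -> mean time in system {w * 1000:.0f} ms")
```

At the same peak load, raising capacity from 95 to 120 requests per second cuts the mean time from 200 ms to about 33 ms. Headroom pays off disproportionately when the system is close to saturation.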

For Czech and Slovak companies that use Claude API in customer care, automation, or data analysis, stable and growing infrastructure is directly relevant. The more capacity, the lower the risk of outages or limitations during a sudden surge in demand.
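On the client side, the usual way to ride out short-lived limits during demand spikes is to retry with exponential backoff and jitter. Anthropic's API signals such conditions with HTTP 429 (rate limited) and 529 (overloaded). The sketch is generic. `send` is a hypothetical callable that performs one request and returns a status code and body, and the delay values are illustrative. The official SDKs already retry some errors by default, so check their settings before adding your own layer.

```python
import random
import time
from typing import Callable, Tuple

RETRYABLE = {429, 529}  # rate limited / overloaded


def call_with_backoff(
    send: Callable[[], Tuple[int, str]],
    max_attempts: int = 5,
    base_delay: float = 1.0,
    max_delay: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
) -> Tuple[int, str]:
    """Call `send` until it returns a non-retryable status or attempts run out.
    Between tries, wait a random time up to base_delay * 2**attempt (capped)."""
    for attempt in range(max_attempts):
        status, body = send()
        if status not in RETRYABLE or attempt == max_attempts - 1:
            return status, body
        delay = min(max_delay, base_delay * 2 ** attempt)
        sleep(random.uniform(0, delay))  # full jitter spreads out retries from many clients
    raise ValueError("max_attempts must be at least 1")


# Hypothetical stand-in for a real HTTP call: overloaded twice, then succeeds.
responses = iter([(529, "overloaded"), (529, "overloaded"), (200, "ok")])
print(call_with_backoff(lambda: next(responses), sleep=lambda s: None))  # (200, 'ok')
```

Injecting `sleep` keeps the function testable without real waiting; in production the default `time.sleep` applies.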

The chip race: Anthropic vs. OpenAI vs. others

Anthropic is not alone in building infrastructure. OpenAI is investing in Project Stargate, a data center venture worth up to $500 billion, in partnership with Microsoft, SoftBank, and Oracle. Google DeepMind operates its own TPU clusters, and Meta is deploying hundreds of thousands of GPUs for internal use.

This race for computing capacity is not just technological: it is a strategic battle over who will be able to train the most powerful next-generation models. Without sufficient infrastructure, even the best research team cannot reach the frontier. That is why gigawatts are not just numbers in newspaper headlines; they are tickets to the AI premier league.

Why does Anthropic need so much power? Wouldn't less be enough?

Training and operating frontier AI models like Claude requires enormous computing capacity. Each new model generation is orders of magnitude more demanding than the previous one. On top of that, Anthropic must handle concurrent requests from millions of users and thousands of enterprise customers in real time, which requires capacity to be available continuously, including at peak load.
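A widely used rule of thumb from the scaling-law literature puts the training compute of a dense transformer at roughly C ≈ 6·N·D floating-point operations, where N is the parameter count and D the number of training tokens. Anthropic does not publish these figures for Claude, so every model size, token count, cluster throughput, and the 40% utilization in this sketch are hypothetical. It only shows how a tenfold jump in compute translates into training time on a fixed cluster.

```python
def training_flops(params: float, tokens: float) -> float:
    """Rule-of-thumb training compute for a dense transformer: C ~ 6 * N * D."""
    return 6.0 * params * tokens


def training_days(flops: float, cluster_flops_per_s: float, utilization: float = 0.4) -> float:
    """Wall-clock days at a given peak cluster throughput and assumed utilization."""
    return flops / (cluster_flops_per_s * utilization) / 86_400


# Hypothetical model generations (not Anthropic's figures): gen B needs 10x gen A's compute.
generations = {
    "gen A": (7e10, 2e12),  # 70B parameters, 2T tokens
    "gen B": (2e11, 7e12),  # 200B parameters, 7T tokens
}
cluster = 1e19  # hypothetical peak FLOP/s of the whole cluster

for name, (n, d) in generations.items():
    c = training_flops(n, d)
    print(f"{name}: {c:.1e} FLOPs, ~{training_days(c, cluster):.0f} days")
```

On the same hypothetical cluster, ten times the compute means ten times the wall-clock time (about 2 versus 24 days here) unless the cluster grows as well, which is one reason each generation calls for more gigawatts.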

What are TPU chips and why does Anthropic choose them instead of NVIDIA GPUs?

TPUs (Tensor Processing Units) are chips designed directly by Google for matrix operations typical of neural networks. They are not a replacement for GPUs — Anthropic uses both. TPUs offer high efficiency and lower costs for specific types of computations, while NVIDIA GPUs are more versatile. A diversified strategy reduces dependence on a single supplier and optimizes costs.

Does this agreement mean that Anthropic is de facto part of Google?

No. While Google is one of Anthropic's largest investors, the company remains independent. The agreement with Google and Broadcom is a commercial partnership — Anthropic pays for computing capacity. At the same time, Anthropic collaborates with Amazon (AWS) and other providers precisely to maintain its independence and negotiating position.